The partnership links sensing, power, and AI computing technologies to help robotics developers move humanoid machines from simulated environments toward safer operation in real-world settings.

Texas Instruments has announced a collaboration with NVIDIA aimed at advancing the development of humanoid robots, a field increasingly described as the frontier of “physical AI.” By combining Texas Instruments’ sensing, control, and power-management technologies with NVIDIA’s AI computing platforms, the companies hope to help robotics developers transition more quickly from simulated prototypes to machines capable of operating safely in real environments.

The effort reflects a broader shift in robotics research, where the challenge is no longer limited to building intelligent software. Developers must also ensure that machines can perceive their surroundings accurately, respond to unpredictable conditions, and interact safely with people and infrastructure. Bridging that gap between digital models and physical deployment has become one of the most complex problems in robotics engineering.

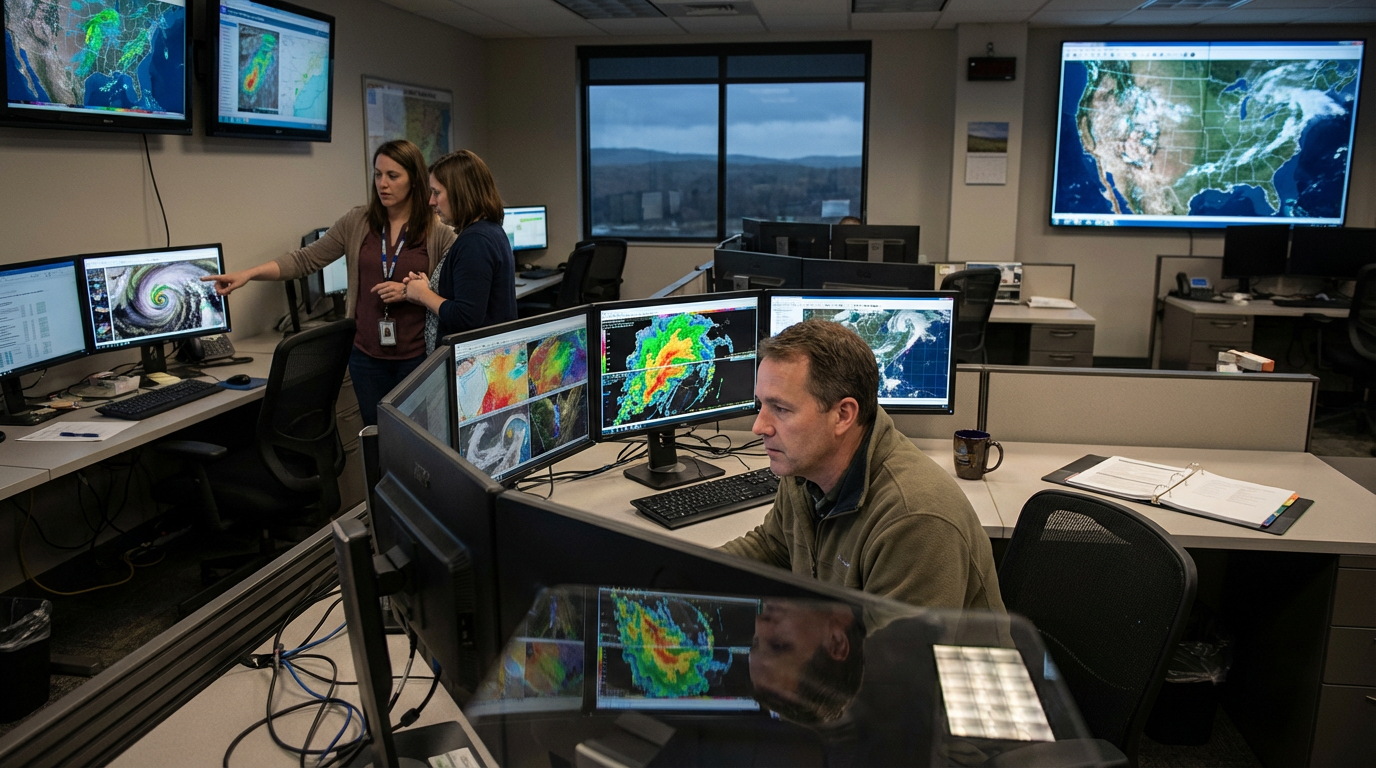

At the center of the collaboration is a system that integrates Texas Instruments’ millimeter-wave radar sensors with NVIDIA’s Jetson Thor computing platform and Holoscan technology. The combination allows robots to process data from multiple sensors—such as cameras and radar—simultaneously, creating a detailed, low-latency view of the environment that can guide movement and decision-making.

Sensor fusion of this kind addresses a practical limitation that has slowed progress in humanoid robotics. Cameras alone can struggle with reflective surfaces, glass, or low-light environments, while radar can detect objects that optical systems miss. Together, the technologies allow machines to navigate complex spaces such as offices, hospitals, or retail environments with greater reliability.
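To illustrate the idea, the sketch below shows one common textbook approach to combining two sensors: inverse-variance weighting, in which the less certain reading counts for less. It is not the method used by Texas Instruments or NVIDIA, whose fusion pipeline the announcement does not describe. The `RangeEstimate` type, the `fuse` function, and the sample readings are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class RangeEstimate:
    """A distance reading from one sensor (hypothetical type for this sketch)."""
    distance_m: float  # estimated distance to an obstacle, in meters
    variance: float    # uncertainty of the estimate in m^2; larger means less trusted


def fuse(camera: RangeEstimate, radar: RangeEstimate) -> RangeEstimate:
    """Combine two independent estimates by inverse-variance weighting."""
    w_cam = 1.0 / camera.variance
    w_rad = 1.0 / radar.variance
    total = w_cam + w_rad
    fused_distance = (w_cam * camera.distance_m + w_rad * radar.distance_m) / total
    # The fused variance is never larger than either input variance.
    return RangeEstimate(fused_distance, 1.0 / total)


# Made-up readings for a glass door in dim light: the camera is assumed
# to be uncertain here, while the radar is assumed to be more reliable.
camera = RangeEstimate(distance_m=4.0, variance=1.0)
radar = RangeEstimate(distance_m=2.0, variance=0.25)

fused = fuse(camera, radar)
print(f"fused distance: {fused.distance_m:.2f} m, variance: {fused.variance:.2f}")
```

With these assumed numbers, the fused estimate lands much closer to the radar reading, because the camera's higher uncertainty reduces its weight. A production system would typically track objects over time rather than fuse single readings.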

The collaboration also highlights how semiconductor companies are becoming central players in the robotics ecosystem. As AI models grow more complex and machines require more precise control systems, the hardware responsible for sensing, processing, and power management increasingly determines what robots can safely accomplish.

Humanoid robotics has attracted renewed attention in recent years as companies explore applications ranging from logistics and manufacturing to service industries. Yet real-world deployment remains limited, in part because machines must operate in environments built for humans rather than on controlled factory floors.

Partnerships like the one between Texas Instruments and NVIDIA suggest an emerging strategy: combining specialized hardware with powerful AI platforms to close the gap between research and practical use. If successful, the approach could help transform humanoid robots from experimental prototypes into systems capable of working alongside people in everyday settings.