As AI workloads surge, LITEON highlights new power and cooling approaches designed to support megawatt-scale infrastructure, signaling a broader shift in how data centers are built and operated.

LITEON Technology used NVIDIA GTC 2026 to present a new generation of data center infrastructure aimed at supporting the rapid growth of artificial intelligence workloads. At the center of its showcase was an 800-volt direct current (800 VDC) power architecture, paired with systems designed for NVIDIA’s Vera Rubin platform, reflecting a shift in how large-scale computing environments are engineered.

The announcement comes as AI models grow increasingly complex, placing unprecedented demands on power delivery and thermal management. Traditional architectures, built for lower-density computing, are struggling to keep pace with the energy and cooling requirements of modern AI clusters, particularly as deployments move toward megawatt-scale operations.

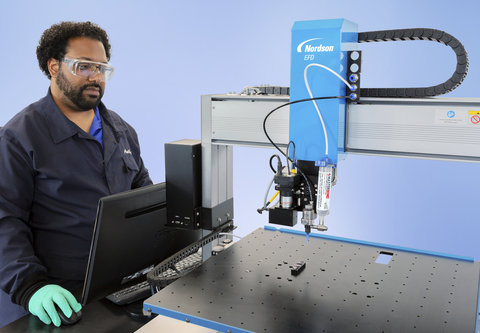

LITEON’s approach emphasizes higher-voltage distribution. For the same delivered power, a higher voltage means lower current, and lower current means smaller resistive losses in cables and busbars. By restructuring how power is distributed, moving from component-level solutions to integrated power racks, the company is addressing both the energy loss and the physical infrastructure constraints that arise in high-performance computing settings.
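The arithmetic behind that claim can be sketched in a few lines of Python. This is an illustration only, not LITEON's design data: the 100 kW rack load, the 54 V comparison bus, and the 1 milliohm conductor resistance are all assumed values chosen to make the relationship visible, and the function names are hypothetical. Only the 800 V figure comes from the announcement.

```python
def bus_current_amps(power_watts: float, voltage_volts: float) -> float:
    """Current drawn on a DC bus delivering the given power (I = P / V)."""
    return power_watts / voltage_volts


def conduction_loss_watts(
    power_watts: float, voltage_volts: float, resistance_ohms: float
) -> float:
    """Resistive loss in the conductor carrying that current (P_loss = I^2 * R)."""
    current = bus_current_amps(power_watts, voltage_volts)
    return current ** 2 * resistance_ohms


# Assumed, illustrative values (not taken from the announcement).
RACK_POWER_W = 100_000         # hypothetical 100 kW rack
CONDUCTOR_RESISTANCE = 0.001   # hypothetical 1 milliohm distribution path

for bus_voltage in (54, 800):  # 54 V is an assumed comparison point
    amps = bus_current_amps(RACK_POWER_W, bus_voltage)
    loss = conduction_loss_watts(RACK_POWER_W, bus_voltage, CONDUCTOR_RESISTANCE)
    print(f"{bus_voltage:>4} V bus: {amps:8.1f} A, {loss:8.1f} W lost in conductor")
```

Because the loss scales with the square of the current, raising the voltage by some factor cuts conduction loss by that factor squared for the same conductor. Real systems also involve conversion-stage losses and safety constraints that this sketch ignores.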

Alongside power systems, the company highlighted liquid-cooling technologies and rack-level designs intended to manage the heat generated by densely packed AI hardware. These developments point to a broader industry realization: as computing power increases, cooling is no longer a secondary consideration but a central design challenge.

The shift toward integrated power and thermal systems reflects deeper changes in data center architecture. Rather than treating power, cooling, and compute as separate layers, newer designs increasingly merge these elements to improve efficiency, reliability, and scalability in environments where small inefficiencies can translate into significant operational costs.

LITEON’s design work around NVIDIA platforms such as MGX and Vera Rubin further underscores how tightly coupled infrastructure and compute ecosystems have become. As AI adoption accelerates, companies are competing not only on processing capability but also on how effectively they can deliver and sustain that performance at scale.

What emerges from LITEON’s announcement is less a single product launch than a signal of transition. The evolution toward high-voltage power systems and advanced cooling suggests that the future of AI will depend as much on infrastructure innovation as on advances in algorithms or chips.

In that sense, the next phase of AI development may hinge on rethinking the physical foundations of computing itself. As data centers evolve to meet these demands, efficiency, resilience, and energy management are likely to define the pace and viability of large-scale AI deployment.