A new infrastructure approach from ASUS highlights how rising computational demands are reshaping data center design, with cooling efficiency and scalability emerging as central concerns in AI deployment.

ASUS has introduced a new generation of liquid-cooled AI infrastructure built on NVIDIA’s Vera Rubin platform, signaling how hardware design is adapting to the rapid growth of large-scale artificial intelligence workloads. The announcement reflects a broader shift in how companies are rethinking data centers to support increasingly complex models while managing energy consumption.

At the core of the system is a rack-scale architecture designed to handle intensive computing tasks associated with training and deploying advanced AI models. By relying on liquid cooling rather than traditional air-based methods, ASUS aims to improve energy efficiency and reduce the operational costs that have become a defining challenge for large AI deployments.

This development arrives as demand for computing power continues to escalate, particularly with the rise of models that require vast amounts of data processing. Data centers, once optimized for general-purpose workloads, are now being reconfigured to accommodate specialized hardware, higher power densities, and more sophisticated thermal management strategies.

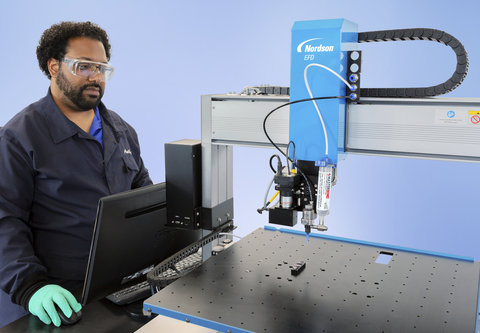

ASUS’s approach extends beyond centralized infrastructure to include edge and enterprise environments, suggesting a more distributed model of AI deployment. Systems designed for desktop-level development, industrial applications, and on-site enterprise use show AI capabilities being pushed closer to where data is generated, rather than relying solely on large cloud facilities.

The emphasis on flexibility reflects an industry-wide trend toward modular and scalable systems. Organizations are increasingly seeking infrastructure that can evolve with changing workloads, whether that involves training large models, running real-time inference, or supporting autonomous systems that require rapid decision-making.

Energy efficiency has become a particularly urgent concern as AI workloads expand. Because liquids carry away far more heat per unit volume than air, liquid cooling is gaining attention as a way to sustain performance in high-density computing environments without a significant increase in the energy spent on cooling.

ASUS’s announcement also points to the growing importance of integrated ecosystems, where hardware, software, and data management tools are designed to work together. As companies invest more heavily in AI, the ability to deploy, manage, and scale these systems efficiently is becoming as critical as raw computational power.

In this context, the move toward liquid-cooled infrastructure underscores a larger transformation in computing. AI is changing not only what machines can do but also how the physical systems behind them are built, operated, and optimized.